Meet Them Where They Are

Shift 7 - People: Train everyone on AI → Enable every person from where they are, with honesty, speed, and trust

Read time: ~10 minutes

This issue draws on 2023-2026 research by BCG, McKinsey, Deloitte, PwC, and Harvard Business School. It’s informed by conversations with executives navigating the same choices and by my own experience leading AI transformation.

70%

That’s how much of your AI value depends on your people. Not the models (10%). Not the technology (20%). The people.[1]

Most organizations invest overwhelmingly in the 10% and 20%. Then they schedule a prompt workshop and call the people part done.

The result: 92% of companies plan to increase their AI investment, yet only 1% of leaders describe their companies as mature.[2]


The people discipline has two sides. Talent management: who you hire, how roles evolve, what the org chart looks like in two years. Enablement: how you train, what tools you provide, and how you lead people through the change.

This issue is about enablement. Not because talent management doesn’t matter. Because enablement is where most organizations are failing right now.

The standard explanation is skills gaps and resistance to change. Those are real. But there’s a deeper problem most companies miss: they’re trying to solve a distribution problem with a standardized solution.

You don’t just have a training problem. You have a distribution problem.

Your people are at different levels. They need different things. And they won’t move if you’re not honest with them about what’s happening.

The Trust Gap

There’s a trust gap most leaders haven’t closed.

Your CEO said “amplification” in the all-hands. Then said “headcount reduction” in the board deck. Your people weren’t in the board meeting. But they know.

52% of workers worry about AI’s impact on their jobs.[3] 53% of people who use AI at work worry that using it makes them look replaceable.[4] Trust in company-provided generative AI fell 31% between May and July 2025. Trust in agentic AI dropped 89% in the same period.[5]

Your people have watched three years of “AI will empower everyone” while reading headlines about layoffs. They’ve heard “no one is losing their job” from companies that then restructured quietly six months later.

Here’s the thing most leaders won’t say: both statements are true at the same time. AI will amplify those who remain. AND the organization may need fewer people. Pretending only the first part is true destroys trust. Acknowledging both builds it.

That’s the honest conversation. Not “AI will empower everyone” (corporate speak nobody believes). Not “use AI or get fired” (a mandate that breeds resentment). The honest version sounds like this: “The world is changing. Some roles will look different. Some will go away. The people who move will be more valuable than ever. Here’s exactly how your role is shifting, and here’s how we support you through it.”

Specificity is what closes the trust gap. “Your role is going to shift from doing X manually to directing AI that does X while you focus on Y” is honest. “AI will empower everyone” is not.


Where Your People Are

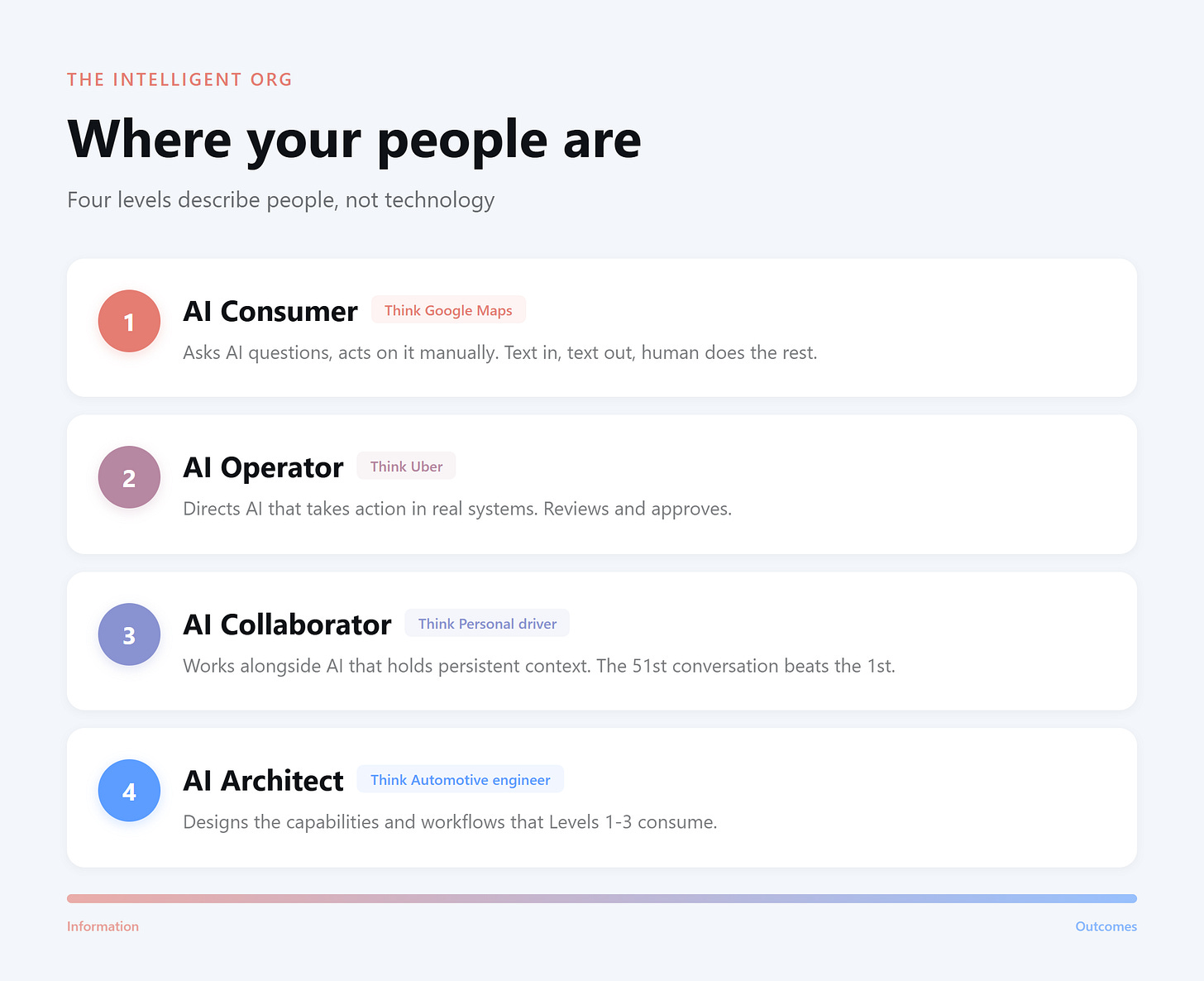

Your organization needs a shared language for this. This is the framework I use.

Level 1: AI Consumer. Asks AI questions, gets information, and acts on it manually. Copies and pastes output into other systems. Text in, text out, human does the rest. Think Google Maps. It gives you turn-by-turn directions. You still drive. Every turn, every merge, every detour. It gives you information. You produce the outcome yourself.

Level 2: AI Operator. Directs AI that takes action in real systems. Reviews and approves. The AI creates the project, updates the CRM, drafts and sends the email. The human isn’t doing the work. They’re directing it. Think Uber. You tell the driver where to go. They drive. You sit in the back, check your email, and redirect if plans change. You’re directing and approving, not driving.

Level 3: AI Collaborator. Works alongside AI that holds persistent context. The AI knows the work. Not just today’s task, but the project history, the client relationship, the patterns from last quarter. The 51st conversation is dramatically more valuable than the first because of everything that came before it. Think of a personal driver. It’s Tuesday at 7:30 AM. You get in the car. The driver already knows: Starbucks first, then the office. They’re not just executing commands. They’re applying accumulated context to anticipate what you need.

Level 4: AI Architect. Designs the capabilities and workflows that Levels 1-3 consume. Builds what others use without their needing to know how it works under the hood. Think of an automotive engineer. They designed the car, the navigation, and the sensors. The Uber rider doesn’t think about the transmission. The personal driver doesn’t worry about how GPS works. But none of it works without the engineer who built it.

These describe people, not technology. A Level 1 person using a Level 3 tool is still at Level 1.

The most important jump is from Level 1 to Level 2. That’s where AI shifts from producing information to producing outcomes.

Why The Standardized Program Fails Everyone

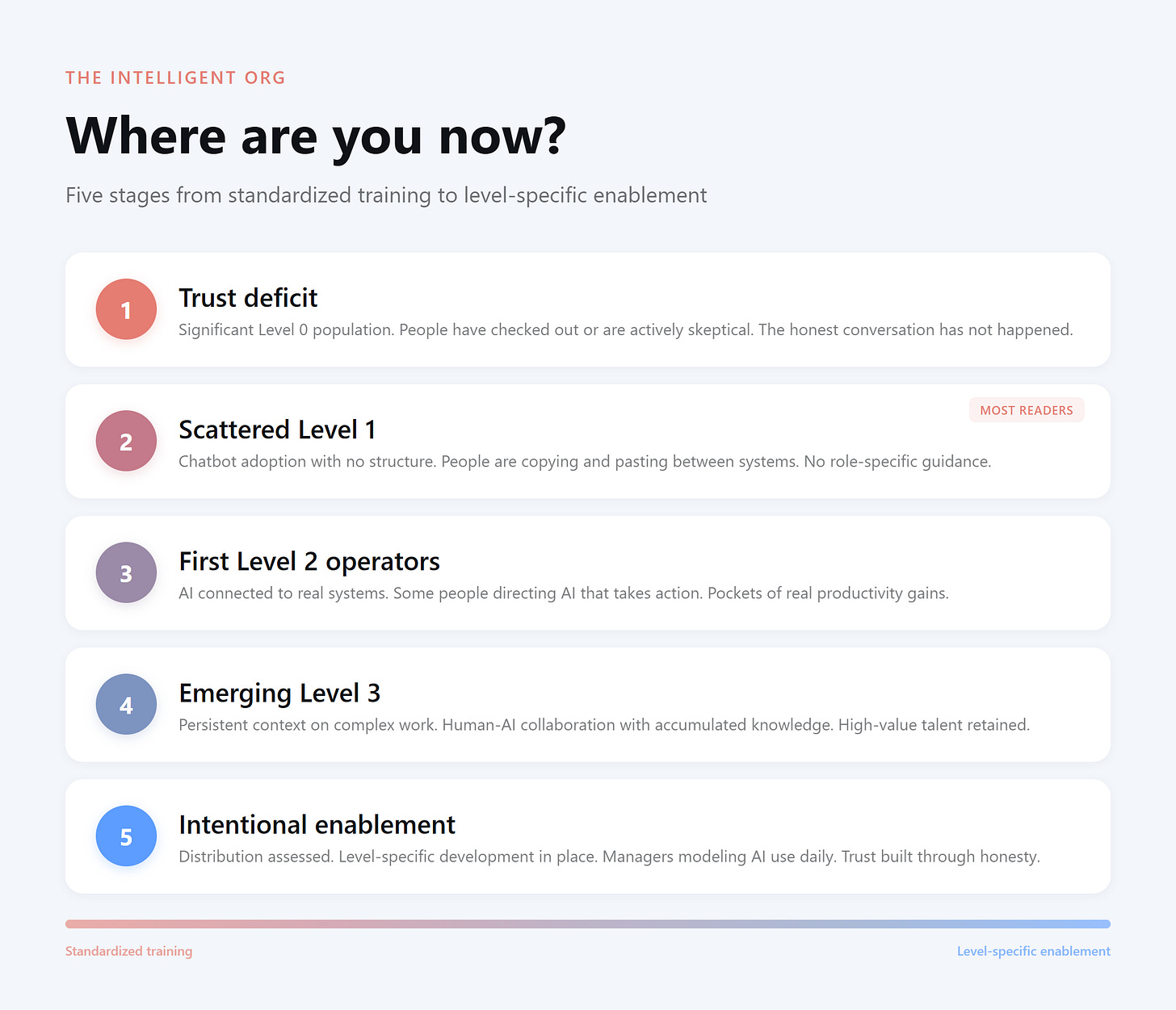

Most companies focus on getting everyone from Level 0 (not yet using AI) to Level 1. Run a prompt workshop. Celebrate adoption metrics.

Here’s what’s actually happening:

Your Level 0 people still don’t trust you. A chatbot workshop didn’t address why. Your Level 1 people got the workshop, but no guidance specific to their role. They still don’t see how this changes their actual work. Your Level 2 people are bored. They outgrew prompting six months ago, and nothing connects to their systems. Your Level 3 people? They’re sitting through a workshop on how to write a good prompt while, under the table, they prompt AI on their phones to find them a new job.

A standardized AI program doesn’t fail quietly. It tells your most capable people that the organization doesn’t understand what’s possible.

And in a market where AI skills carry a 56% wage premium,[6] those people have options.


The talent risk runs in both directions. You’re underserving the bottom of the ladder, so they never climb. You’re insulting the top, so they leave.

Either way, you end up with a workforce stuck at Level 1.

What Each Level Actually Needs

I hit this wall recently. A team I was supporting had a mix of Level 1, Level 2, and a couple of Level 3 people. They asked me what tooling to enable across the team.

I wanted to recommend the Level 3 tool. It was the right answer for where AI is heading. But I looked at their team and knew: most of them weren’t ready for it. Half the team would open it once, get overwhelmed, and never come back.

The Level 1 tool they already had wasn’t getting the job done either. People were copying and pasting between systems, doing manually what AI should have been handling. They’d outgrown it but didn’t know what came next.

I kept going back and forth. One tool for everyone. That’s how you do it, right? Pick a platform, roll it out, train the team.

That’s when it hit me. The question wasn’t “What tool does this team need?” The question was “What does each person on this team need?” The Level 1 people needed a connection to their actual systems. The Level 2 people needed more autonomy and fewer guardrails. The Level 3 people needed persistent context and the freedom to build their own workflows.

One team. Three different answers.

Level 0 to Level 1: Show them what’s possible

Don’t start with training. Start with exposure.

Most people’s first experience with AI is the chatbot. They ask a question, get a mediocre answer they could have found themselves, and go back to doing things their way. That experience confirmed what they already suspected.

The gap goes deeper than willingness. Manal Iskander trains faculty at California universities on AI implementation. “They can’t understand even the word orchestration,” she told me. “Terms like data governance or workflow automation go right over their heads.” She estimates 75-80% of cybersecurity students, the people you’d expect to be early adopters, have “no clue.” The gap is vocabulary, and no prompt workshop is going to fix it.

Let people see what Levels 2 and 3 look like. Not a demo. The real thing. Show them what happens when AI takes action in real systems and holds context across weeks of work. That’s what changes minds. Not a slide deck about prompt engineering.

The manager matters most here. BCG found that 88% of managers in future-built companies model AI use on a daily basis. At laggards, only 25%.[7] People follow what their manager does, not what the company emails them about.

Be honest about what’s changing. Name the identity shifts ahead: “Your value is about to move from execution to judgment and taste. That’s uncomfortable. Here’s how we support you.”

Don’t force adoption. Create the conditions where it’s safe to try. But don’t pretend the world isn’t changing.

Level 1 to Level 2: The big jump

This is the highest-ROI investment in the entire people discipline. This is where AI stops producing information and starts producing outcomes.

The skill shift is fundamental. Not “how to prompt” but “how to direct and verify.” You learn to define the outcome you want rather than prescribing the steps. You learn to review AI work: what to check, what to trust, where errors hide.

This matters because AI has what Harvard researchers call a “jagged frontier.” On tasks within its capabilities, consultants using AI were over 25% faster and produced over 40% higher-quality work. On a task outside its capabilities, they were 19 percentage points less likely to reach a correct solution than consultants working without AI.[8] Knowing where AI works and where it doesn’t is the critical Level 2 skill.

The tools matter as much as the skills. AI needs to connect to the systems where work happens: CRM, project management, file storage, and email. If AI can’t take action where the work lives, the person stays at Level 1, no matter how skilled they are.

And then there’s the identity shift. This is the part nobody talks about in the training program. Level 2 means going from doer to director. For people who built their careers on being fast and accurate at hands-on execution, value now shifts to their judgment. You’re rethinking what makes you good at your job.


Level 2 to Level 3: The collaboration

Level 3 is where the work changes fundamentally. You’re not using a tool anymore. The closest human analogy: working with a colleague who’s been on your team for six months. They know the project history, the client preferences, and what you tried last quarter that didn’t work. You don’t start from zero. You pick up where you left off.

The skills at this level are different. Context engineering: structuring the information environment your AI works within. Design thinking: breaking complex work into components that a human-AI pair can execute. Quality judgment at scale: knowing when to trust AI’s patterns and when to override.

The tools are still emerging, but they are real: persistent workspaces that hold context, learn from interactions, and evolve over time.

Not all work needs Level 3. Transactional work is perfectly served at Level 2. Level 3 is for complex, judgment-heavy, context-dependent work. The work done by the people you can least afford to lose.

Level 4: The architect

Most organizations won’t build this internally. They partner for it.

The executive question: “How do we access Level 4 capability?” We’ll go deeper in a future issue.

Where Are Your People?

One question to take into Monday morning:

“Where are our people, and where do we need them to be?”

Walk through your organization. Assess honestly:

What percentage is at Level 0? That tells you something about your organization’s honesty and the experience you’re offering.

What percentage is at Level 1? That’s your enablement gap. They need a connection to real systems and role-specific guidance.

Who’s at Level 2? That’s your emerging capability. Are you investing in them or leaving them to figure it out?

Who’s at Level 3? That’s your highest-value talent. Are you supporting them or losing them?

Where’s Level 4 coming from? Build or partner?

Then the strategic question: where does your strategy require people to be?

If your strategy needs 40% of people at Level 2 within twelve months (Issue 1), that becomes a resource allocation conversation (Issue 3), which requires the right governance (Issue 4), designed into pods (Issue 5), operating as a system (Issue 6), enabled through honest, level-specific development (Issue 7).

All seven disciplines. Connected.

The Choice

70% of AI value comes from the people component.[9] That 70% depends on three things happening at the same time: being honest about what’s changing, meeting each person where they are, and moving with urgency.

Not in sequence. Not “build trust, then enable.”

Trust IS what happens when you meet people where they are, when you’re honest about the identity shifts, when you invest in level-specific development instead of a generic workshop.

Enablement done right is trust-building.

You can have the best strategy. The right ownership model. A clear investment thesis. Governance built into the architecture. Beautifully designed human+AI pods. A system that’s ready to scale.

None of it works without people who are enabled, moving, and willing to go on this journey with you.

The Intelligent Org: Seven disciplines. Seven foundational shifts.

Strategy. AI isn’t the strategy. It advances the strategy.

Ownership. Business leaders own AI. Technologists enable it.

Value. Every initiative has an investment thesis.

Governance. Built into the architecture, not bolted on.

Design. Amplify humans, don’t automate them out.

Operations. Systems, not heroes.

People. Meet them where they are with honesty, speed, and trust.

Each discipline supports the others. Skip any, and the system breaks. But if one undergirds the rest, if one determines whether everything else succeeds or fails, it’s this one.

These seven disciplines are the foundation. Next, we apply them: pods, amplification chains, value chains. The full architecture of the intelligent, amplified organization.

But architecture without enabled, trusting people is just a blueprint that never gets built.

The companies that win won’t have the best AI. They’ll have the best amplified humans. That starts with one commitment: be honest about what’s changing, meet every person where they are, and move.


What’s next

Season 1 is complete. In Season 2, we build: pods, amplification chains, and the full architecture of the intelligent organization. Subscribe so you don’t miss it.

1. Julie Bedard and Vinciane Beauchene, “AI Transformation Is a Workforce Transformation,” Boston Consulting Group, February 4, 2026.

2. Hannah Mayer, Lareina Yee, Michael Chui, and Roger Roberts, “Superagency in the Workplace,” McKinsey & Company, January 2025.

3. Pew Research Center, 2024 survey. As compiled in SHRM, “How to Engage Employees in AI Without Triggering Fear,” August 2025.

4. Microsoft and LinkedIn, “2024 Work Trend Index,” 2024.

5. Ashley Reichheld et al., “Workers Don’t Trust AI. Here’s How Companies Can Change That,” Harvard Business Review, November 7, 2025.

6. PwC, “Global Workforce Hopes and Fears 2025,” November 2025.

7. Julie Bedard and Vinciane Beauchene, “AI Transformation Is a Workforce Transformation,” Boston Consulting Group, February 4, 2026.

8. Fabrizio Dell’Acqua et al., “Navigating the Jagged Technological Frontier,” Harvard Business School Working Paper 24-013, September 2023.

9. Julie Bedard and Vinciane Beauchene, “AI Transformation Is a Workforce Transformation,” Boston Consulting Group, February 4, 2026.