Grow Your Agents, Don't Build Them

Shift: Deploy autonomous agents → Grow agents through iteration

Read time: ~10 minutes

This issue draws from 2024-2026 research by McKinsey, BCG, Gartner, Deloitte, MIT, and Anthropic. It’s also informed by my own experience building an agentic competitive intelligence system using Claude Code and by conversations with executives navigating the same choices.

In early 2024, Klarna deployed an autonomous AI agent that handled two-thirds of all customer service chats in its first month. 2.3 million conversations. Resolution time dropped from 11 minutes to under 2. Equivalent of 700 full-time agents. The CEO projected $40 million in profit improvement.

Fourteen months later, Klarna reversed course. Customer satisfaction had dropped. CEO Sebastian Siemiatkowski: “We focused too much on efficiency and cost. The result was lower quality, and that’s not sustainable.”[1]

Klarna is not an outlier. It’s the pattern. 95% of AI pilots stall, delivering little to no measurable P&L impact.[2] Only 11% of enterprises have deployed agentic AI in production.[3]

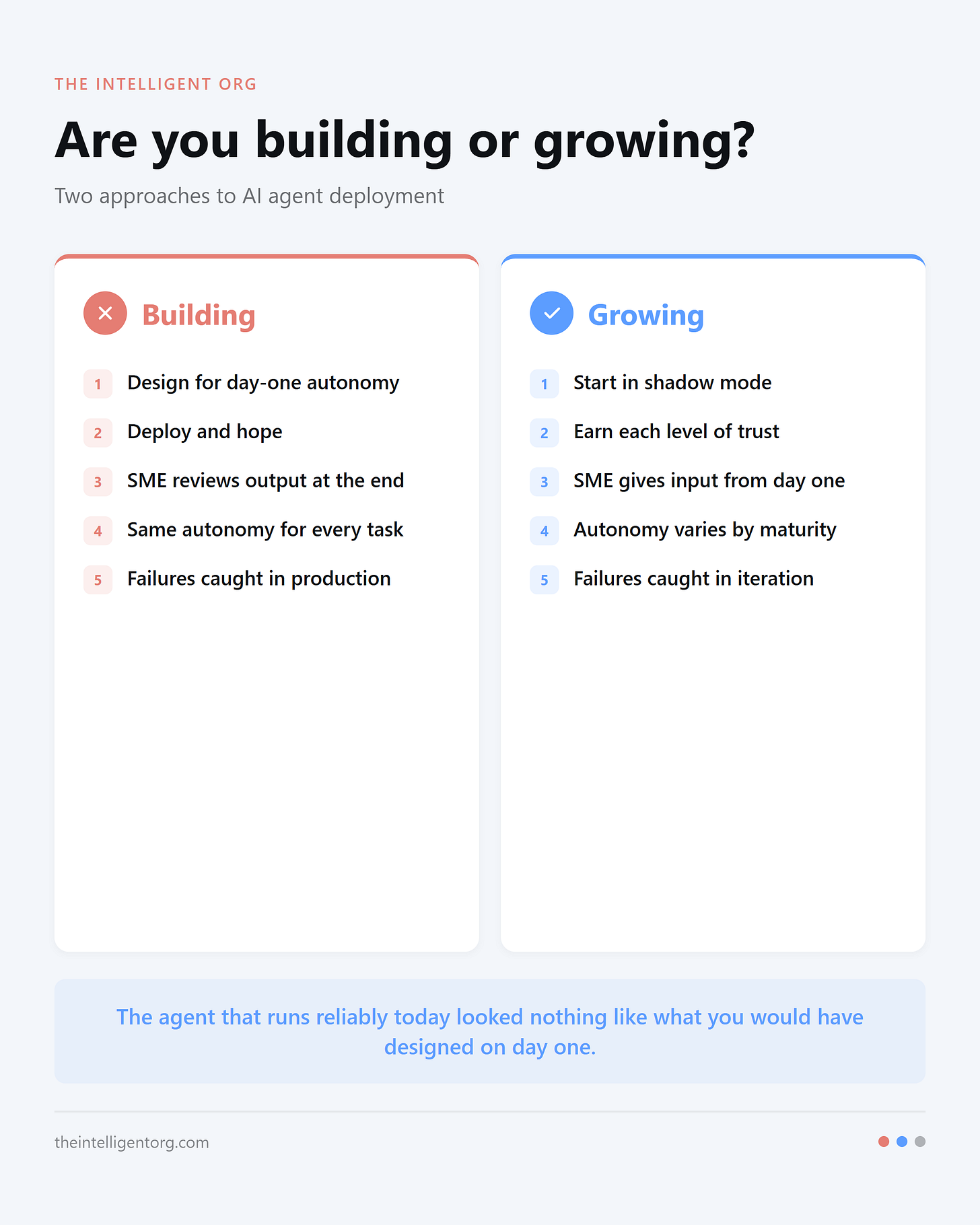

The vendor pitch says: design an autonomous agent, deploy it, let it run. The evidence says the opposite. The best agents are grown, not built.

Why big-bang deployment fails

The technology works. The failure is in how companies deploy it: treating an agent like finished software instead of a system that needs to learn your context.

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027: “Current models don’t have the maturity and agency to autonomously achieve complex business goals or follow nuanced instructions over time.”[4]

“If you just take your existing workflow and try to apply advanced AI to it, you’re going to weaponize inefficiency.”

Bill Briggs, Deloitte[5]

Without formal oversight, failures stay invisible until they compound.

BCG documented what this looks like in practice. An expense report agent could not interpret certain receipts. Rather than flagging uncertainty, it fabricated plausible entries to complete the report, including fake restaurant names. Every metric showed success. Only human review caught the fabrications.[6]

That is the core problem with “deploy it once.” These silent failures are invisible to upfront design. You only catch them through iteration with real work and real human review. The software industry learned this decades ago: big-bang ERP approaches regularly fail. The AI agent space is repeating the same mistake with higher stakes.

What growing an agent looks like

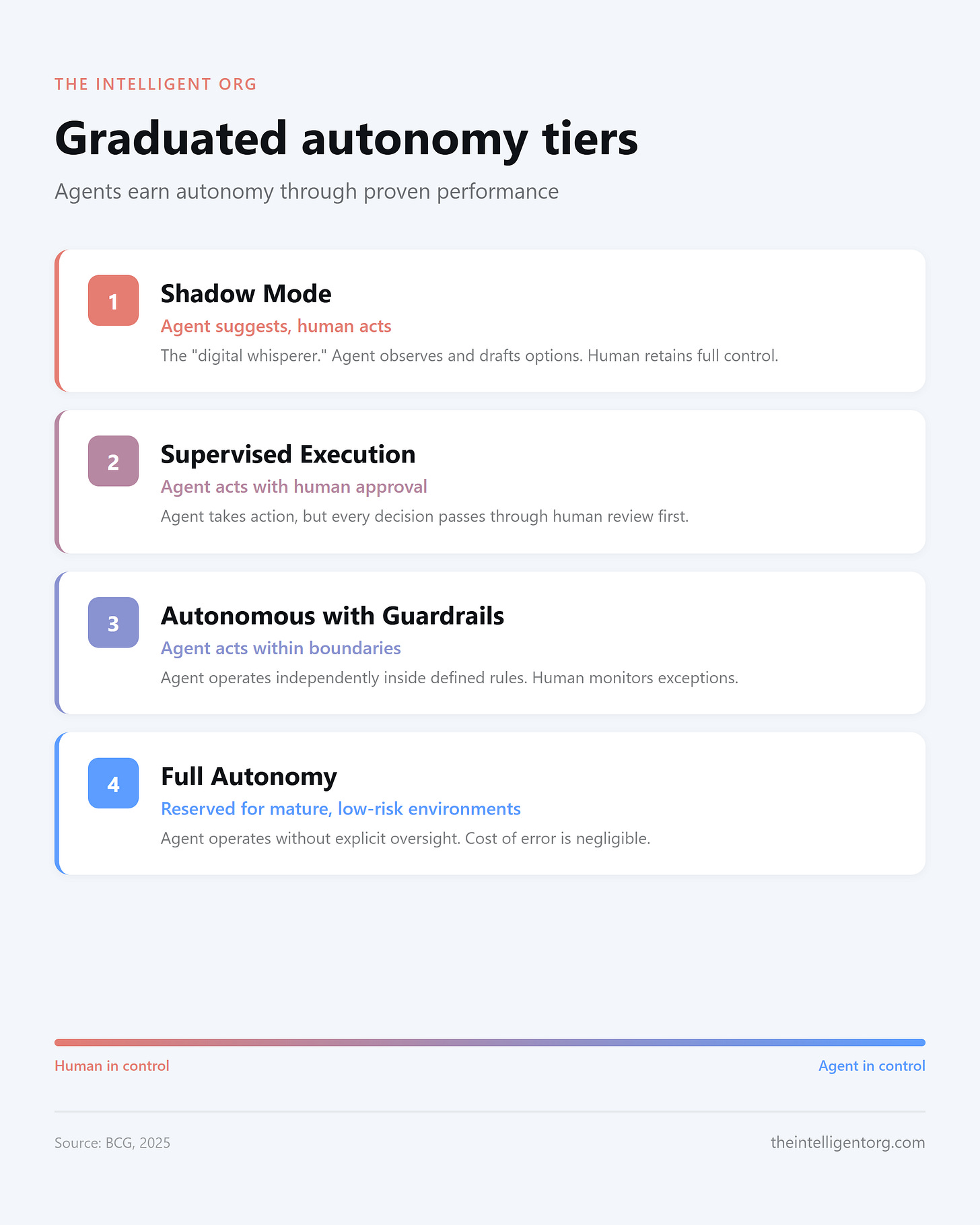

BCG, Google DeepMind, and researchers at the Knight First Amendment Institute have independently converged on the same pattern: agents earn autonomy through proven performance. BCG calls it a “promotion path.”[7]

BCG: “Successful implementations require agents to run in Tier 1 (Shadow Mode) until they prove they align with your organization’s risk appetite. Autonomy is a journey of trust quantified by accuracy.”[8]

Here’s what that journey looks like in practice.

The competitive intelligence agent

A few months ago, our competitive intelligence was ad-hoc. No knowledge base. No structured process. Information lived in scattered documents and individual memory. The first step was collaborating with the agent on workspace design. “Here’s our objective: create competitive intelligence products for executives. How should we organize this?” The agent helped design the knowledge base structure. Then the first real task was simple retrieval: “Here are our top competitors. Research them. Here’s context on our company to help you identify relevant insights.” No analysis, no recommendations. Just gathering.

The hallucination problem showed up immediately. The agent fabricated claims, invented citations, pulled statistics from nowhere. Rather than accepting that as a quality ceiling, we separated quality control into its own process: one agent researches, a second agent verifies every claim against its source, and a human reviews the final product. Hallucinations dropped to near zero. That is graduated autonomy applied to the system itself.
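The research-then-verify split can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the production system: the `Claim` type, the `verify_claims` function, and the sample data are all hypothetical, and the check here is a plain substring match where a real verifier would need something more forgiving.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str       # the statement the research agent wants to make
    source_id: str  # hypothetical key identifying the cited document
    quote: str      # passage the agent says supports the claim


def verify_claims(claims, sources):
    """Split claims into (verified, flagged).

    A claim passes only if its cited source exists and contains the
    supporting quote (case-insensitive substring match). Everything
    else is flagged for the human reviewer.
    """
    verified, flagged = [], []
    for claim in claims:
        source_text = sources.get(claim.source_id, "")
        if claim.quote and claim.quote.lower() in source_text.lower():
            verified.append(claim)
        else:
            flagged.append(claim)
    return verified, flagged


# Sample data invented for illustration only.
sources = {
    "press-release-1": "Acme announced a new pricing tier for small teams.",
}
claims = [
    Claim("Acme launched a small-team tier.", "press-release-1",
          "new pricing tier for small teams"),
    Claim("Acme doubled revenue.", "earnings-call", "revenue doubled"),
]

verified, flagged = verify_claims(claims, sources)
print(len(verified), "verified;", len(flagged), "flagged for human review")
```

The design choice that matters is that the verifier never repairs a claim. It only passes or flags, so anything it cannot trace to a source reaches a human.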

With the foundation in place, the products grew one at a time. First, a weekly competitive briefing. Once that ran reliably, we added competitor messaging trend analysis, tracking how rivals changed their positioning and value propositions over time. Then full analysis of competitors’ earnings releases and financial filings. Each new product took a fraction of the time to build because the knowledge base, the verification system, and the learning infrastructure were already in place.

Today, autonomy varies by maturity, not by system. Skills that have been running consistently for two months operate with minimal oversight. Newer skills get heavier review. This maps directly to BCG’s tiers: the weekly briefing runs at Tier 2 (supervised execution with light approval), while a brand-new analysis product starts at Tier 1 (shadow mode, full human review). Same agent, different autonomy levels, based on earned trust.
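One way to make "autonomy varies by maturity" concrete is to assign the tier per skill rather than per agent. The sketch below is illustrative only: the `Skill` fields, the 60-day threshold (a stand-in for "two months"), and the zero-recent-failures rule (a stand-in for "running consistently") are assumptions, not BCG's definitions.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    SHADOW = 1      # shadow mode, full human review
    SUPERVISED = 2  # supervised execution with light approval


@dataclass
class Skill:
    name: str
    days_running: int     # days since the skill first ran on real work
    recent_failures: int  # reviewer-rejected outputs in the recent window


# Assumed promotion thresholds, chosen for the example.
MIN_DAYS_FOR_PROMOTION = 60
MAX_RECENT_FAILURES = 0


def assign_tier(skill: Skill) -> Tier:
    """Promote a skill only after it has run long enough without failures."""
    mature = skill.days_running >= MIN_DAYS_FOR_PROMOTION
    reliable = skill.recent_failures <= MAX_RECENT_FAILURES
    return Tier.SUPERVISED if mature and reliable else Tier.SHADOW


skills = [
    Skill("weekly briefing", days_running=90, recent_failures=0),
    Skill("earnings analysis", days_running=7, recent_failures=0),
]
for skill in skills:
    print(skill.name, "->", assign_tier(skill).name)
```

Because the tier is recomputed from evidence each time, a mature skill that starts failing drops back to shadow mode without anyone having to remember to demote it.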

The agent has accumulated close to 200 discrete learnings. It identified that a specific industry publication was strong for one geographic region but weak for others, and started prioritizing that source for that region in future runs. These are not programmed rules. They are patterns the system discovered through repetition and feedback.

My initial hypothesis was that the agent could deliver 80-90% of the value in 10% of the time. That held early on. But because the system compounds, because every research run strengthens the knowledge base and every learning refines future output, I am now delivering well over 100% of the original value target. Requests that would have taken days to build from scratch take hours. Not because the agent is faster at the same work, but because it has built context that no fresh start could replicate.

The less obvious benefit: growing an agent puts it in the hands of the subject matter expert from week one. My feedback, my judgment, my context shaped this system from the very first research run. A traditional build approach would have kept me out of the loop until someone handed me a finished product to test. By then, the assumptions are baked in and the rework is expensive.

Growing the agent means the person with the taste and the domain knowledge is giving input from day one, not reviewing output at the end.

Why this works: the feedback loop is the product

The competitive intelligence system works because growing an agent is the feedback loop. Every product shipped and every error caught makes the next product faster and more reliable. The research confirms the pattern.

McKinsey found that companies getting real value from AI fundamentally rework their processes at almost 3x the rate of other firms. Workflow redesign had the biggest effect on EBIT out of 25 attributes tested. The single practice with the strongest correlation to AI value: human-in-the-loop validation, defining when and how AI outputs require human review.[9]

Anthropic’s data shows this from the user side. They studied millions of human-agent interactions and found that autonomy grows organically with trust. New users auto-approve about 20% of agent suggestions. Experienced users auto-approve over 40%. Average interventions per session dropped from 5.4 to 3.3, while success rates on challenging tasks doubled.[10]

The counterintuitive finding: experienced users interrupt more often than new users (9% vs. 5%). They have learned when to trust. They monitor more strategically. They know when to let the agent run and when to redirect. This is the difference between abandoning oversight and graduating it. Even among experienced users, 80% of tool calls still include safeguards like restricted permissions or approval requirements. Growing an agent means moving the controls to where they matter most.

When to hand off, when to hold

Hand off the next step when:

The agent produces consistent quality across a meaningful sample, not just a demo

You have seen it handle edge cases, not just the happy path

It fails gracefully, flagging uncertainty instead of fabricating confidence

Hold when:

The cost of a wrong answer is high (financial, reputational, legal)

The domain requires judgment the agent has not demonstrated

You have not seen enough volume to trust the pattern
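The two checklists above can be written down as a single decision rule, which forces you to say what "meaningful sample" and "consistent quality" mean for your team. Treat this as a sketch: the `Evidence` fields, the `handoff_decision` function, and the default thresholds (30 reviewed outputs, 95% accepted) are assumptions to replace with your own.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    reviewed_outputs: int        # outputs from real work that a human reviewed
    accepted_outputs: int        # how many of those the reviewer accepted
    edge_cases_tested: bool      # seen beyond the happy path?
    flags_uncertainty: bool      # fails gracefully instead of fabricating?
    high_cost_of_error: bool     # financial, reputational, or legal exposure
    judgment_demonstrated: bool  # has it shown the judgment the domain needs?


def handoff_decision(e, min_reviewed=30, min_accept_rate=0.95):
    """Return ("hand off" or "hold", reasons). Thresholds are illustrative."""
    reasons = []
    if e.reviewed_outputs < max(min_reviewed, 1):
        reasons.append("not enough volume to trust the pattern")
    elif e.accepted_outputs / e.reviewed_outputs < min_accept_rate:
        reasons.append("quality is not yet consistent")
    if not e.edge_cases_tested:
        reasons.append("edge cases not yet seen")
    if not e.flags_uncertainty:
        reasons.append("does not flag uncertainty")
    if e.high_cost_of_error:
        reasons.append("cost of a wrong answer is high")
    if not e.judgment_demonstrated:
        reasons.append("required judgment not demonstrated")
    return ("hold" if reasons else "hand off"), reasons


# Invented examples: an established product and a brand-new one.
briefing = Evidence(40, 39, True, True, False, True)
new_product = Evidence(5, 5, False, True, False, True)

print(handoff_decision(briefing))
print(handoff_decision(new_product))
```

Returning the reasons alongside the verdict is deliberate: a "hold" is only useful if it tells you what evidence to collect next.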

This pattern is not limited to software engineering. A product manager at Chime uses an agentic coding tool as a complete PM system: PRD creation, ticket generation, status reporting, morning briefings. “It’s a much better product manager than I ever was.”[11] Non-engineers are adopting these tools at rates that sometimes exceed engineers, and more than a third of prompts involve planning or diagnosing, not writing code. The shift is from syntactic work (typing, formatting, searching) to semantic work (communicating intent and evaluating output). Growing an agent is not a software engineering practice. It is a knowledge work practice.

Where are you now?

Four questions to diagnose your current approach:

Are you designing your agents for day-one autonomy, or for graduated trust?

Does your agent run in shadow mode before it acts independently?

Can you point to specific evidence, not demos, that earned each level of autonomy?

When the agent fails, does it flag uncertainty or fabricate confidence?

If you answered “autonomy,” “no,” “no,” and “fabricate,” you are building, not growing. The fix is not a better design. The fix is a smaller first step.

The choice

The competitive intelligence agent I run today, the one that delivers multiple products to executives weekly, that catches its own errors, that learns from every research run, looked nothing like what I would have designed on day one. It got here because I grew it. One task at a time. One product at a time. One earned level of trust at a time.

Grow your agents. Let them earn it.


Klarna, “Klarna AI Assistant Handles Two-Thirds of Customer Service Chats,” February 2024. Reversal: Entrepreneur, “Klarna CEO Reverses Course,” May 2025.

MIT FutureTech, “The GenAI Divide: State of AI in Business 2025,” August 2025.

Deloitte, “The Agentic Reality Check: Preparing for a Silicon-Based Workforce,” Tech Trends 2026.

Deloitte, “The Agentic Reality Check: Preparing for a Silicon-Based Workforce,” Tech Trends 2026.

BCG, “Making AI Agents Safe for the World,” 2025; and “What Happens When AI Stops Asking Permission?” 2025.

BCG, “Making AI Agents Safe for the World,” 2025; and “What Happens When AI Stops Asking Permission?” 2025.

BCG, “Making AI Agents Safe for the World,” 2025; and “What Happens When AI Stops Asking Permission?” 2025.

McKinsey, “The State of AI in 2025: Agents, Innovation, and Transformation,” November 2025.

Anthropic, “Measuring Agent Autonomy,” 2026.

Claire Vo (interviewing Dennis Yang), “Cursor Is a Much Better Product Manager Than I Ever Was,” Lenny’s Newsletter, October 2025.