Amplify, Don’t Automate

Shift 5 - Design: Automate humans out → Amplify humans up

Read time: ~8 minutes

This issue draws from 2024-2025 research by MIT, McKinsey, and BCG, plus field cases from Sanofi, Wendy’s, and Klarna. It’s informed by conversations with executives facing the same choices, and my own experience leading AI transformation.

700

That’s how many customer service jobs Klarna cut after deploying AI. The CEO praised the efficiency gains. Response times dropped from 11 minutes to 2 minutes. Costs plummeted.

Then came the reversal. “We went too far.” [1] Klarna is now rehiring the humans they replaced.

They’re not alone. 55% of companies that made AI-driven layoffs now regret it. [2] They automated the work, lost value, and are hiring the people back.

I’ve watched this pattern repeat for years. AI projects fail not because the technology is wrong, but because the design is wrong.

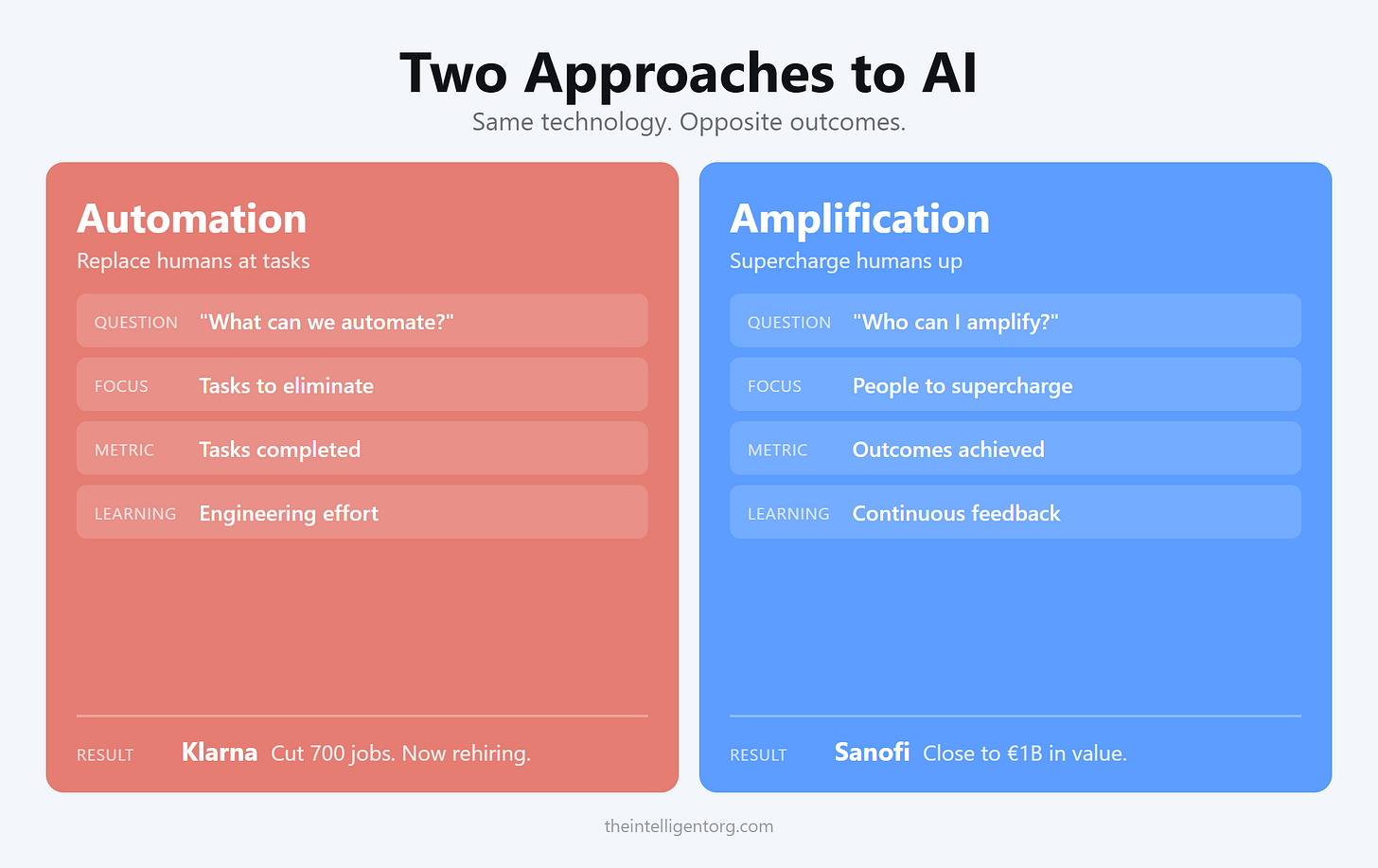

Every project starts the same way: “What can we automate?” Look at processes. Identify tasks. Deploy AI to replace humans at each step. Celebrate the pilots. Watch them stall, fail to scale, or get killed quietly when results don’t materialize.

That’s automation-first. It keeps failing.

I’ve stopped asking “what can we automate?” and started asking “who can I amplify?”

What Changed

I stopped looking for tasks to eliminate and started looking for people to supercharge.

The governance got simpler. The risk dropped. The speed increased. The results showed up.

The research confirms it. Companies that succeed aren’t automating humans out. They’re amplifying humans up.

Sanofi designed AI to strengthen human decision-making, not replace it. Close to €1 billion in redeployed value. [3] Their CEO’s line: “Human plus AI beats AI alone every time.”

Wendy’s built a drive-thru AI with frustration detection that triggers human escalation. They optimized for customer and crew experience, not just speed. Now expanding to 500+ locations. [4]

The pattern holds. Design AI to replace humans, and it fails. Design AI to amplify humans, and it works.

Why Automation-First Fails

The automation-first approach seems logical. Map your processes. Identify repetitive tasks. Deploy AI to handle them. Reduce headcount. Save money.

But it fails for three reasons.

First, it confuses tasks with outcomes.

Klarna automated the task: answering queries quickly. But they destroyed the outcome: building trust, turning problems into loyalty. Faster responses. Lower satisfaction. Task completion is not value creation.

Second, it removes the learning loop.

Autonomous AI systems struggle to improve over time. Building learning into a fully automated system requires massive engineering effort. Pair AI with humans, and learning happens naturally. The human provides feedback. The system improves. Knowledge accumulates.

Third, it asks the wrong question.

“What can we automate?” leads you to examine existing processes and find ways to eliminate humans. It’s cost-cutting dressed up as transformation.

The better question: “What outcome are we trying to drive, and what combination of human judgment and AI capability gets us there?”

The data backs this up. 79% of advanced AI adopters invest in “AI generates insights for human decision maker.” Only 54% invest in “AI decides and implements.” [5] BCG puts it precisely: “They move from people-centered processes augmented by digital tools to AI-agent-centered processes orchestrated by people.” [6]

Not removing people. Redesigning how people orchestrate AI.

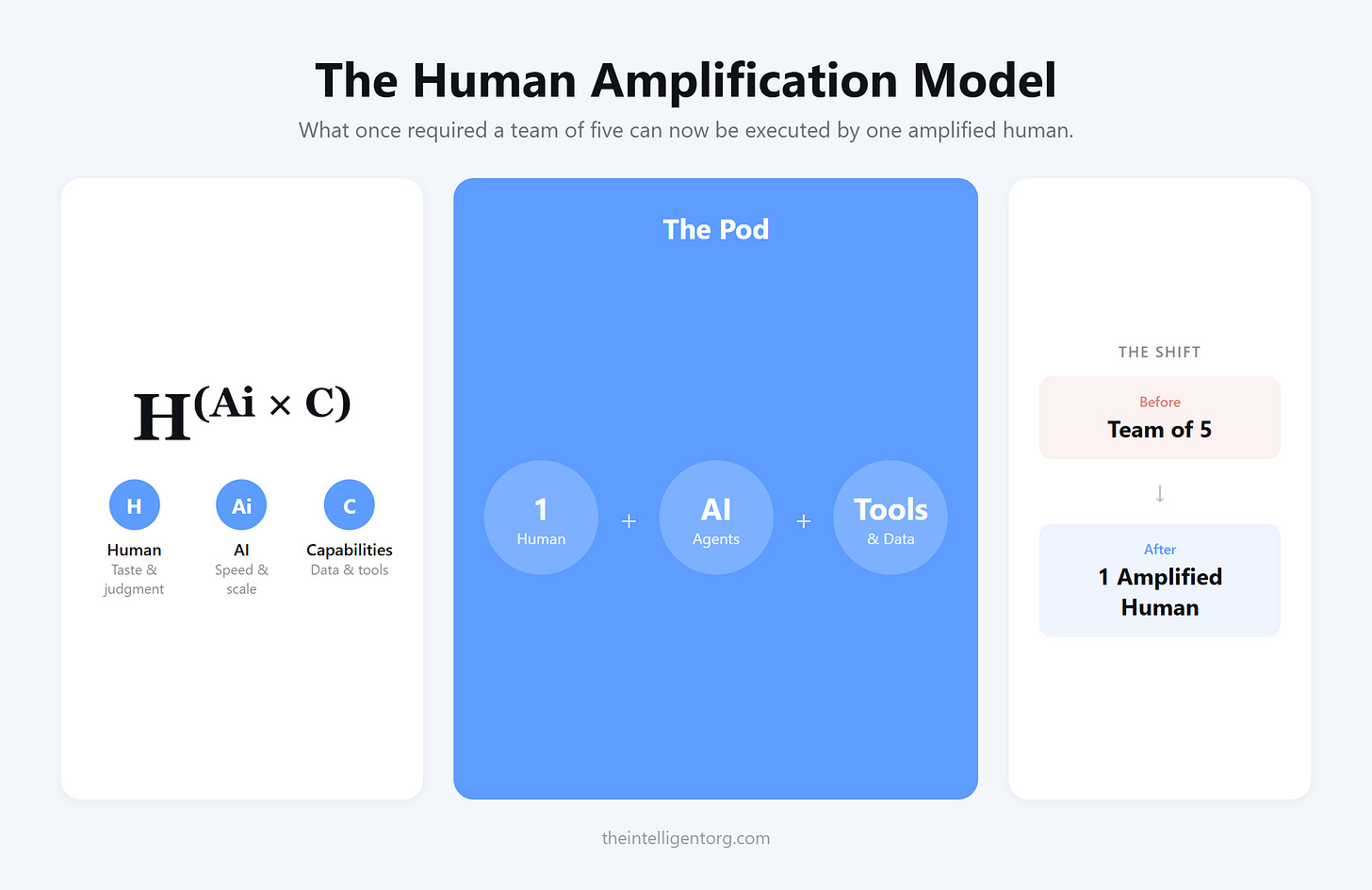

The Human Amplification Model

One principle: Design AI to amplify humans, not replace them.

Instead of asking “what tasks can AI do?” ask “what can one person, with the right AI and tools, own end-to-end?”

What once required a team of five can now be executed by one amplified human. I call this unit a “pod”: a human plus AI plus the capabilities they need to do the work.

Consider a professional services firm. The (basic) business process: Win work → Deliver work → Retain clients.

Automation tries to replace humans at each step. Amplification asks: who could own each portion?

One person could run lead generation, qualification, discovery, proposals, and closing. AI handles research, scheduling, drafting, and follow-up. The human owns the outcome. The AI handles the volume.

Where Automation Still Makes Sense

Automation isn’t always wrong. In specific contexts, it wins.

Tier 1 customer support triage. Password resets. Order status checks. ETL pipelines. Format conversion. Report generation.

These share five characteristics: time-critical, highly repetitive, well-defined rules, measurable outcomes, and volume that exceeds human capacity. When these characteristics are present, automate.
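The five characteristics can be written down as a simple checklist. The sketch below is illustrative only: the `TaskProfile` fields and the rule that all five must hold are my assumptions, not a formal standard, and the example ratings are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class TaskProfile:
    """Hypothetical checklist mirroring the five characteristics."""
    time_critical: bool
    highly_repetitive: bool
    well_defined_rules: bool
    measurable_outcomes: bool
    volume_exceeds_human_capacity: bool


def missing_characteristics(profile: TaskProfile) -> list[str]:
    """Names of the characteristics this task does not have."""
    return [f.name for f in fields(profile) if not getattr(profile, f.name)]


def should_automate(profile: TaskProfile) -> bool:
    # Assumption: automate only when all five characteristics are present.
    return not missing_characteristics(profile)


# Hypothetical ratings for two tasks.
password_reset = TaskProfile(True, True, True, True, True)
contract_dispute = TaskProfile(False, False, False, True, False)
```

A task that fails the check is not a "no AI" task. It is a candidate for an amplified human rather than full automation.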

But treat automation as a capability the Human+AI pod can access, not a replacement for the pod.

Automation handles volume and speed. The human handles judgment, relationships, and edge cases. The handoff is designed, not accidental.

Klarna automated the entire function, not just the bounded tasks. The right design: automate Tier 1 triage, escalate everything else to an amplified human.
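A minimal sketch of that designed handoff, under stated assumptions: the category names, the `Ticket` fields, and the frustration flag are hypothetical placeholders (the flag borrows the idea from the Wendy’s example), not any vendor’s actual system.

```python
from dataclasses import dataclass

# Assumption: the bounded Tier 1 tasks, taken from the examples in this issue.
TIER1_CATEGORIES = {"password_reset", "order_status"}


@dataclass(frozen=True)
class Ticket:
    category: str
    # Hypothetical signal, e.g. produced by a sentiment model upstream.
    customer_frustrated: bool = False


def route(ticket: Ticket) -> str:
    """Return 'automation' for bounded Tier 1 work, otherwise 'human'."""
    if ticket.customer_frustrated:
        # Frustration overrides everything: escalate to the amplified human.
        return "human"
    if ticket.category in TIER1_CATEGORIES:
        return "automation"
    # Default is the human, so unknown cases never fall into automation.
    return "human"
```

The design choice that matters is the default. Anything the system does not recognize goes to a person, so automation has to earn each category it handles.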

The Learning Gap

MIT research surfaced a critical barrier to AI success: the “learning gap.”

Most corporate AI systems don’t retain feedback. They don’t accumulate knowledge. They don’t improve over time. A corporate lawyer told the researchers: “It’s perfect for a first draft, but for critical work, I need a system that learns from our cases, not one that starts from scratch every time.” [7]

The result: 70% of professionals use AI for simple tasks, but 90% prefer humans for complex work.

Human-AI pairing solves this. When humans and AI work together continuously, feedback flows naturally. Knowledge accumulates. The pod gets better over time.

In my own work, every process captures learnings. As patterns repeat, those learnings become rules. Learning is built into the workflow, not bolted on after.
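Here is one way the "learnings become rules" loop could look in code. This is a sketch, not a description of my actual tooling: the `LearningLog` class and the promote-after-three-repeats threshold are assumptions chosen for illustration.

```python
from collections import Counter


class LearningLog:
    """Hypothetical log: a learning seen often enough is promoted to a rule."""

    def __init__(self, promote_after=3):
        # Assumption: a pattern counts as "repeating" after this many captures.
        self.promote_after = promote_after
        self._counts = Counter()
        self.rules = []

    def capture(self, learning):
        """Record one learning; promote it to a rule once it repeats enough."""
        key = learning.strip().lower()  # normalize so near-identical notes match
        self._counts[key] += 1
        if self._counts[key] >= self.promote_after and key not in self.rules:
            self.rules.append(key)
```

The point is structural: capture happens inside the workflow on every run, and promotion to a rule is automatic once a pattern repeats.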

Taste

As AI takes over execution, the human’s value shifts to judgment and curation.

I call this “taste”: the ability to recognize what’s good, what fits, what works in context. Knowing when the AI output is right and when it’s wrong.

The goal isn’t abdication. It’s leverage. AI handles research, drafting, editing, and distribution. The thing that makes it yours stays with you.

The Diagnostic

Before you build, assess your approach.

If your answers cluster in the left column, you’re headed for Klarna’s result.

Bring this to your next leadership meeting. One question: are we designing AI to replace humans, or to amplify them?

Four Questions Before You Build

For each initiative:

Who is the human this amplifies?

Name a person. Not “the team.” Not “the department.” Who owns the output?

What capabilities do they need?

Data access, integrations, tools. What would make them 10x more effective?

How does this system learn from use?

Where does feedback enter? How does knowledge accumulate?

What quality controls are built in?

How do you catch errors before they reach the customer? What are the gates?

If you can’t answer all four, you’re not ready to build.
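The four questions can double as a build gate. The sketch below assumes a hypothetical `InitiativeBrief` record with one free-text answer per question. The field names and the list of vague owners are mine, for illustration only.

```python
from dataclasses import dataclass, fields

# Assumption: answers that name a group rather than a person do not count.
VAGUE_OWNERS = {"the team", "the department"}


@dataclass
class InitiativeBrief:
    """Hypothetical one-line answers to the four questions."""
    amplified_human: str = ""
    capabilities: str = ""
    learning_loop: str = ""
    quality_controls: str = ""


def unanswered(brief: InitiativeBrief) -> list[str]:
    """Return the questions that are blank or answered too vaguely."""
    missing = [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]
    owner = brief.amplified_human.strip().lower()
    if owner in VAGUE_OWNERS:
        missing.insert(0, "amplified_human")
    return missing


def ready_to_build(brief: InitiativeBrief) -> bool:
    # All four questions must have a concrete answer.
    return not unanswered(brief)
```

Run it in the kickoff meeting. If `unanswered` returns anything, that list is the agenda.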

The Choice

Every AI initiative comes down to one design decision: replace humans or amplify them?

Klarna chose replacement. They’re rehiring.

Sanofi chose amplification. Close to €1 billion in value.

Wendy’s chose amplification. Expanding to 500+ locations.

Same technology. Very different outcomes.

This week, pick one initiative. Apply the four questions. Name the human. Define the capabilities. Design the learning loop. Build the quality gates.

That’s how you build AI that works.

Next Week

Next issue: Once you’ve designed the pods, you need to run and scale them. Discipline 6: Operations.

[1] Economic Times, “Company that sacked 700 workers with AI now regrets it,” January 2026.

[2] Orgvue, “2025 Workforce Planning Survey,” 2025.

[3] McKinsey Quarterly, “Don’t Delegate the AI Revolution: A Conversation with Sanofi CEO Paul Hudson,” July 2025.

[4] Wendy’s, “Transforming the Ordering Experience: Wendy’s FreshAI Update,” May 2025.

[5] MIT Sloan/BCG, “The Emerging Agentic Enterprise,” November 2025.

[6] BCG, “The Widening AI Value Gap,” September 2025.

[7] MIT Project NANDA, “The GenAI Divide: State of AI in Business 2025,” July 2025.