AI Governance by Design

Shift 4 - Governance: Governance is a committee → Governance by design, everyone's job

Read time: ~12 minutes

This issue draws from 2024-2025 research by EY, Deloitte, McKinsey, and MIT Sloan. It's informed by conversations with executives navigating the same choices, and my own experience leading AI transformation.

The Terminology Problem

Ask five executives what “AI governance” means. You’ll get five different answers.

One thinks it’s about data privacy. Another thinks it’s compliance with EU regulations. A third imagines a committee that approves AI projects. The fourth is worried about vendors training models on company data. The fifth has no idea but knows the board keeps asking about it.

This confusion is why governance conversations go nowhere. Everyone is talking past each other because they’re using the same word to mean different things.

Only 12% of C-suite executives can correctly identify appropriate controls for AI risks, according to EY’s 2025 survey of 975 leaders.[1] Chief Risk Officers scored below average at 11%. Deloitte found that 66% of boards still have “limited to no knowledge or experience” with AI.[2] The people responsible for governance can’t describe how it works.

Before we can fix AI governance, we need to name what we’re actually talking about.

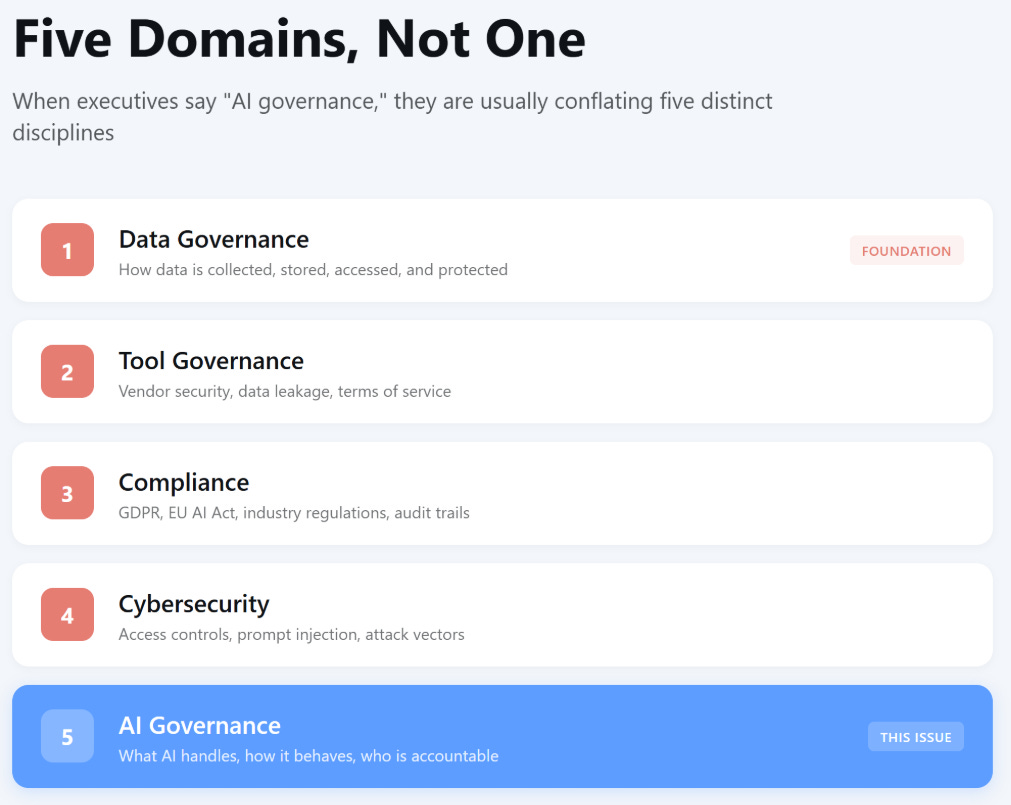

Five Domains, Not One

When executives say “AI governance,” they’re usually conflating five distinct disciplines. Each has different owners, different expertise, and different solutions.

Data governance is the foundation. How is data collected, stored, accessed, and protected? Who can access what, and under what conditions? What are the quality standards? What is the lineage?

This discipline predates AI by decades. It’s the prerequisite. If your data governance is broken, AI governance cannot succeed. You’re building on sand.

Data governance is not the focus of this issue. But if you don’t have it, stop here and fix it first.

Tool governance is about the vendors and platforms you use. Are your AI tools leaking company data? Are vendors using your data to train their models? What are their security practices? What do the terms of service actually say?

This matters because most companies are buying AI capabilities rather than building them. Menlo Ventures found that 76% of enterprise AI is now purchased rather than built, up from a near-even split (47% built, 53% purchased) just a year earlier.[3] The tools you choose create exposure before you write a single prompt.

We’ll cover tool governance in depth in a future issue. For now, know that it’s distinct from the other four.

Compliance is the regulatory layer. GDPR. CCPA. The EU AI Act. Colorado’s AI Act. Industry-specific rules in healthcare and financial services. Audit trails. Documentation. This is what legal teams typically focus on when they hear “AI governance.”

Compliance is necessary. It’s also insufficient. You can be fully compliant and still have ungovernable AI systems.

Cybersecurity is about protecting AI systems from threats. Access controls. Identity management. Integration security. Prompt injection defense. The OWASP Top 10 for AI Agents identifies threats such as authorization hijacking, critical system interaction, and knowledge base poisoning.[4]

When AI systems have access to sensitive data and the ability to take actions, they become attack vectors. A compromised AI agent with access to your CRM, email, and calendar is not just an AI problem. It’s a security problem.

AI governance is the focus of this issue. It answers three questions:

What should AI handle, and what must it never touch?

What controls ensure AI behaves as intended over time?

Who is accountable for AI outputs?

AI governance is about the decisions you make when designing, deploying, and operating AI in your specific context.

Why does this distinction matter?

Different domains require different owners. Data governance is the responsibility of IT and data teams. Tool governance involves security, legal, and procurement. Compliance is legal’s domain. Cybersecurity belongs to your CISO. AI governance requires business leadership.

Conflating them creates governance theater: one committee trying to handle all five domains, doing none of them well.

You need all five. But this issue focuses on AI governance specifically: the discipline that determines whether AI does what you intend, reliably, over time.

What AI Governance Actually Means

AI governance has three components: selection, design, and operation.

Selection happens before you build. What should AI handle? What must it never touch? Where are the boundaries?

Design happens as you architect. What controls ensure AI behaves as intended? What guardrails prevent it from crossing boundaries?

Operation happens as you run. Who is accountable for outputs? How do you verify it’s working? What happens when something goes wrong? How do you maintain reliability over time?

Guardrails Defined

Guardrails are the architectural constraints that prevent AI from doing things it shouldn’t. They come in several forms:

Topic restrictions that prevent the AI from addressing specific subjects

Business logic that handles critical decisions through deterministic algorithms, not LLMs

Output validation that checks results before they reach users

Human approval checkpoints at high-stakes decision points

Access controls that limit what data and systems the AI can reach

The key distinction: guardrails are built into the system. They’re not policies people are supposed to follow. They’re constraints the architecture enforces.
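To make the difference between a policy and an enforced constraint concrete, here is a minimal Python sketch of three of the guardrail types above applied in code before anything reaches a user. The topic labels, the `Draft` fields, and the length limit are all hypothetical; a real system would define its own.

```python
from dataclasses import dataclass

# Hypothetical out-of-scope topics; each deployment would define its own list.
BLOCKED_TOPICS = {"mortgage advice", "investment recommendations"}


@dataclass
class Draft:
    topic: str         # topic label assigned upstream (assumed to exist)
    text: str          # model-generated text
    high_stakes: bool  # whether this output needs human sign-off


def apply_guardrails(draft: Draft) -> str:
    """Return 'blocked', 'rejected', 'needs_approval', or 'released'."""
    # Topic restriction: out-of-scope subjects never get through.
    if draft.topic in BLOCKED_TOPICS:
        return "blocked"
    # Output validation: a trivial stand-in check (non-empty, bounded length).
    if not draft.text.strip() or len(draft.text) > 2000:
        return "rejected"
    # Human approval checkpoint for high-stakes outputs.
    if draft.high_stakes:
        return "needs_approval"
    return "released"
```

The point of the sketch is that the caller cannot skip a check: every draft passes through the same function, so the boundary holds regardless of what the model produced.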

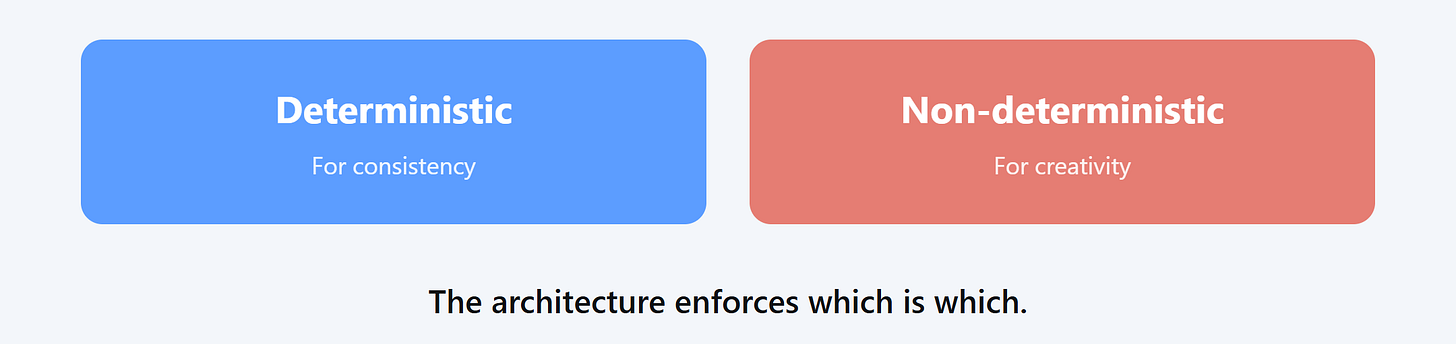

Deterministic vs. Non-Deterministic

This distinction is critical for AI governance.

Deterministic means the same input always produces the same output. A pricing algorithm that applies your rate card produces the same price every time for the same inputs. A tax calculation follows fixed rules. A compliance check against a defined policy yields a clear yes or no.

Non-deterministic means outputs can vary. LLMs are non-deterministic by design. Ask the same question twice and you may get slightly different answers. This is a feature for creative tasks. It’s a risk for decisions that require consistency.

The governance principle: critical decisions that require consistency, auditability, and precision belong in deterministic systems. LLMs handle interpretation, generation, and judgment. The architecture enforces the boundary.

Example: Proposal pricing

A professional services firm uses AI to generate proposals. The AI drafts scope descriptions, writes executive summaries, and suggests approaches based on similar past projects.

Pricing does not go through the LLM.

The firm’s pricing follows a rate card with defined multipliers for complexity, timeline, and risk. This logic lives in a deterministic system. The AI cannot override, modify, or “interpret” pricing rules. When the proposal is assembled, pricing is determined by the algorithm, not the model.

If the LLM handled pricing, you’d get inconsistent quotes. The same project might price differently depending on how you phrase the prompt. A client could get a lower price by asking differently. The firm would have no audit trail for why a price was set.

Deterministic for consistency. Non-deterministic for creativity. The architecture enforces which is which.
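A rough sketch of what that separation could look like in Python. The rates, roles, and multiplier values are invented for illustration and are not the firm's actual rate card; the only thing taken from the example is the shape: a rate card, multipliers for complexity, timeline, and risk, and a price the model never computes.

```python
from decimal import Decimal

# Hypothetical hourly rates and multipliers, for illustration only.
RATE_CARD = {"consultant": Decimal("200"), "principal": Decimal("350")}
MULTIPLIERS = {
    "complexity": {"low": Decimal("1.0"), "high": Decimal("1.25")},
    "timeline": {"standard": Decimal("1.0"), "rush": Decimal("1.2")},
    "risk": {"low": Decimal("1.0"), "high": Decimal("1.1")},
}


def price_proposal(hours_by_role, complexity, timeline, risk):
    """Deterministic pricing: the same inputs always give the same price."""
    base = sum(RATE_CARD[role] * hours for role, hours in hours_by_role.items())
    factor = (
        MULTIPLIERS["complexity"][complexity]
        * MULTIPLIERS["timeline"][timeline]
        * MULTIPLIERS["risk"][risk]
    )
    return (base * factor).quantize(Decimal("0.01"))


def assemble_proposal(llm_draft: str, price: Decimal) -> str:
    """The model writes the narrative; the price is attached by code."""
    return f"{llm_draft}\n\nTotal price: ${price:,}"
```

Because `price_proposal` takes only structured inputs, rephrasing the prompt cannot change the quote, and the inputs themselves serve as the audit trail.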

Why This Matters Now

A chatbot that answers questions is different from AI that takes actions. Today’s AI systems can send emails, modify records, execute transactions, and communicate with third parties. This isn’t the autonomous AI of science fiction. It’s the conversational AI assistants that companies are deploying right now. When AI can act, the governance burden scales with capability.

Get AI governance wrong, and you face two outcomes:

Paralysis: nothing ships because nobody can agree on what’s safe

Exposure: things ship without real oversight, and you discover the problems after damage is done

EY found that 99% of organizations have experienced financial losses from AI-related risks, with an average loss of $4.4 million.[5] The top dangers: non-compliance with regulations (57%), negative sustainability impacts (55%), and biased outputs (53%).

The Shift: Governance by Design

The companies moving fastest on AI didn’t skip governance. They made it everyone’s responsibility from the start.

Selection: Before You Build

Before development starts, answer these questions:

What should AI handle in this use case?

What must AI never handle?

What data will AI access?

What actions can AI take?

ING’s chatbot boundaries

ING built a customer-facing chatbot and deployed it to production in seven weeks.[6] They didn’t skip governance. They evaluated 20 different risks for every use case upfront.

Selection was explicit: the chatbot would handle common customer service questions. It would never handle mortgage advice or investment recommendations. These weren’t guidelines. They were architectural boundaries.

The system couldn't give mortgage advice because the architecture prevented it. Not because a policy said so. Because the system couldn't do it.

The result: 20% more customers helped without agent intervention, deployed in seven weeks. Compare that to companies where governance committees meet quarterly to review projects that shipped months ago.

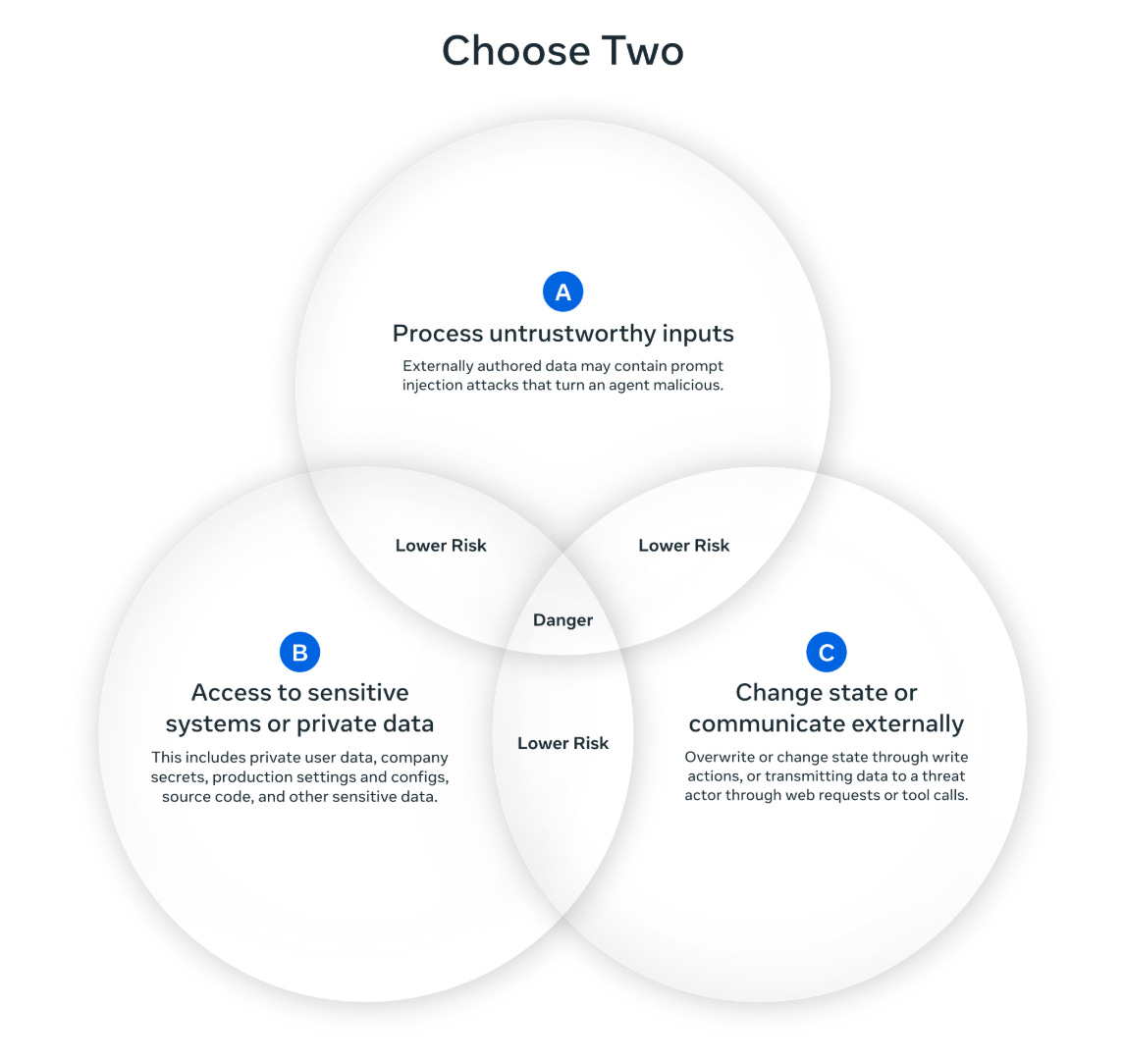

The Rule of Two

Meta’s security framework provides a useful constraint: “no agent should have access to all three of these within a single session.”[7] The three are:

Untrusted inputs (emails, customer data, external content)

Ability to take actions (send messages, modify systems, execute transactions)

Access to confidential or sensitive data

Having all three creates what security researcher Simon Willison calls the “lethal trifecta,” the pattern Meta’s rule is designed to break. A prompt injection attack can then complete the whole chain: malicious input reaches private data and exfiltrates it to the attacker.

Design your AI so that no single agent crosses all three boundaries. If all three are required, require human approval before action.
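One way to picture the rule as a design-time check, sketched in Python. The `AgentProfile` class and its field names are hypothetical; they simply mirror the three properties listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    reads_untrusted_input: bool    # emails, customer data, external content
    can_take_actions: bool         # send messages, modify systems, transact
    accesses_sensitive_data: bool  # confidential or sensitive data


def requires_human_approval(agent: AgentProfile) -> bool:
    """True when the agent holds all three properties at once."""
    properties = (
        agent.reads_untrusted_input,
        agent.can_take_actions,
        agent.accesses_sensitive_data,
    )
    return sum(properties) == 3
```

An agent with any two of the three passes the check; only the combination of all three forces a human into the loop.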

Design: As You Architect

Critical decisions belong in deterministic systems. Non-deterministic LLMs handle interpretation and generation.

Compliance checking

A financial services firm uses AI to review documents for compliance issues. The AI reads contracts and flags potential problems.

The compliance rules themselves are deterministic. “Any contract over $1M requires CFO approval” is a rule, not a judgment call. The AI identifies that a contract is over $1M. The rule system determines which approvals are required.

What the AI handles: reading the contract, extracting the dollar amount, and identifying relevant clauses.

What deterministic logic handles: applying the compliance rules, determining required approvals, and generating the audit trail.

The AI can’t decide that a $1.2M contract “probably doesn’t need” CFO approval because it’s “pretty close” to the threshold. The architecture prevents it.
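A minimal sketch of that split, assuming the AI's only contribution is an extracted dollar amount. The function and class names are hypothetical; the $1M threshold comes from the example rule above.

```python
from dataclasses import dataclass
from decimal import Decimal

# "Any contract over $1M requires CFO approval" (the example rule above).
CFO_APPROVAL_THRESHOLD = Decimal("1000000")


@dataclass(frozen=True)
class ApprovalDecision:
    amount: Decimal
    approvals: tuple  # names of required approvers
    rule: str         # the rule that fired, kept for the audit trail


def decide_approvals(extracted_amount: Decimal) -> ApprovalDecision:
    """Apply the rule to the amount the AI extracted. No judgment involved."""
    if extracted_amount > CFO_APPROVAL_THRESHOLD:
        return ApprovalDecision(
            extracted_amount, ("CFO",), "amount > 1,000,000: CFO approval required"
        )
    return ApprovalDecision(
        extracted_amount, (), "amount <= 1,000,000: CFO approval not required"
    )
```

The model never sees the threshold, so there is nothing for it to round, soften, or reinterpret.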

Quality gates in the workflow

ING built guardrails directly into its chatbot architecture: the boundary on mortgage advice was enforced by the system itself, not by a policy.

Quality gates belong in the workflow, not after it. Instead of reviewing finished outputs, check quality as work moves through the process:

Automated validation before outputs reach users

Human checkpoints at high-stakes decision points

Rejection before bad outputs cause damage
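As an illustration of gates placed inside the workflow, here is a small Python sketch in which every stage is paired with a check that runs before the next stage starts. The stage and gate functions are placeholders supplied by the caller, not part of any particular framework.

```python
from typing import Callable, List, Tuple

Stage = Callable[[str], str]  # transforms the work item
Gate = Callable[[str], bool]  # returns True if the result may proceed


def run_workflow(text: str, steps: List[Tuple[Stage, Gate]]) -> Tuple[bool, str]:
    """Run each stage, then its gate. Stop at the first failed gate."""
    for stage, gate in steps:
        text = stage(text)
        if not gate(text):
            # Rejected here, before later stages or users ever see it.
            return False, text
    return True, text
```

A gate could be an automated validator or a function that waits for a human decision; the structure is the same either way.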

Governed capabilities: least privilege

Each AI component accesses only what it needs. No broad permissions. No “just in case” access. The architecture limits exposure.
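A least-privilege allow-list can be very small. The sketch below uses invented component and tool names; the idea is only that access is denied unless it was explicitly granted.

```python
from typing import Callable, Dict, FrozenSet

# Hypothetical components and the only tools each one may call.
ALLOWED_TOOLS: Dict[str, FrozenSet[str]] = {
    "faq_bot": frozenset({"search_help_center"}),
    "proposal_drafter": frozenset({"read_past_proposals"}),
}


class ToolAccessDenied(PermissionError):
    """Raised when a component requests a tool outside its allow-list."""


def call_tool(component: str, tool: str, registry: Dict[str, Callable[[], str]]) -> str:
    # Unknown components get an empty allow-list, so the default is "deny".
    allowed = ALLOWED_TOOLS.get(component, frozenset())
    if tool not in allowed:
        raise ToolAccessDenied(f"{component} may not call {tool}")
    return registry[tool]()
```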

Mastercard requires product owners to complete a scorecard before any AI system is built or a contract is signed.[8] Their philosophy: “If risk might be present, treat it as though it is. And if you’re wrong, you’ll get that in control verification, because the team will give you evidence they mitigated the risk.”

Operation: As You Run

The human stays accountable for outputs. When AI augments a human, the human owns the result. This isn’t about blame. It’s about ensuring someone is paying attention.

MIT Sloan and BCG’s 2025 research found that 79% of advanced AI adopters invest in AI that augments human judgment, while only 54% invest in fully automated “AI decides and implements” systems.[9] The companies seeing results keep humans in the loop.

Reliability over time

AI systems drift. Models degrade. Data distributions shift. What worked in month one may fail in month six.

Governance by design includes monitoring for reliability:

Track output quality over time, not just at launch

Establish thresholds that trigger review

Build retraining and adjustment into the operating model

Document what “good” looks like so you can detect when it changes
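The monitoring steps above can be sketched as a rolling-average check in Python. The quality score, baseline, and window size are assumptions; how output quality is actually scored depends on the use case.

```python
from collections import deque
from statistics import mean


class QualityMonitor:
    """Flags a review when the rolling average falls too far below baseline."""

    def __init__(self, baseline: float, max_drop: float, window: int = 50):
        self.baseline = baseline  # the documented definition of "good"
        self.max_drop = max_drop  # tolerated drop before review is triggered
        self.scores = deque(maxlen=window)

    def record(self, score: float) -> bool:
        """Add a score; return True when the threshold triggers a review."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        return window_full and mean(self.scores) < self.baseline - self.max_drop
```

Waiting for a full window avoids raising an alarm on a single bad output while still catching gradual decline.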

Mastercard’s AI governance team, which grew from one person to five, manages a portfolio of AI systems that doubled annually. They accomplish this through systematic monitoring, not heroic individual effort.

Everyone owns governance within their scope

Both ING and Mastercard used the exact same phrase, unprompted: “Governance as part of everyone’s job.”

This approach is the opposite of a governance committee. When governance is everyone’s job:

You don’t wait for committee approval to make obvious decisions

Risk assessment is distributed, not centralized

The person closest to the work makes the call within defined boundaries

Speed increases, not decreases

JoAnn Stonier, Mastercard Fellow: “Perhaps that’s because everybody in the company is more aware and digitally engaged now, and they see governance as part of everyone’s job.”[10] Result: they allowed ChatGPT when other companies banned it, and “haven’t had any problems thus far.”

The Diagnostic

One question reveals where you stand:

“Where does governance live in your AI initiative?”

If the answer is “a committee,” “a review process after we build,” or “one person’s responsibility,” you’re bolting governance on. It will slow you down without reducing risk.

If the answer is “in the architecture, the business logic, and the workflow, owned by everyone involved,” you’ve designed governance in. It enables speed because the system itself enforces the boundaries.

Four Questions Before You Build

For your next AI initiative, answer these before development starts:

What should AI handle, and what must it never touch? Define the boundaries. Not as guidelines. As architectural constraints.

What decisions are deterministic vs. non-deterministic? Pricing, compliance rules, and approval thresholds go in code. The LLM handles interpretation and generation.

Where are the quality gates in the workflow? Identify checkpoints where problems get caught. Build them into the process, not after it.

Who is accountable for the output? Name a person. Not a committee. Not “the AI team.” A human who owns the result.

If you can’t answer all four, you’re not ready to build.

The Choice

The companies moving fastest on AI took governance most seriously. They didn’t create committees. They made it everyone’s job. They embedded governance in the architecture, not the org chart.

Governance by design doesn’t slow you down. It’s what lets you move fast.

ING deployed to production in seven weeks because they answered the governance questions upfront. The professional services firm that’s been working on a pilot for nearly a year is still trying to answer them after the fact.

Same technology. Opposite approaches. Different outcomes.

The choice is straightforward: bolt governance on after and watch it become theater, or design it in from the start and build something you can actually control.

Next Week

Next issue: Once you’ve designed governance in, you need to actually build the thing. Discipline 5: Design.

[2] Deloitte, “Governance of AI: A Critical Imperative for Today’s Boards (2nd Edition),” May 2025.

[3] Menlo Ventures, “The State of Generative AI in the Enterprise,” 2025.

[4] OWASP, “Top 10 for Agentic Applications,” 2026.

[6] McKinsey/QuantumBlack, “Banking on Innovation: How ING Uses Generative AI to Put People First,” 2024.

[7] Meta AI, “Agents Rule of Two: A Practical Approach to AI Agent Security,” October 2025.

[8] Dataversity, “Case Study: Operationalizing AI Governance at Mastercard,” 2025.

[9] MIT Sloan/BCG, “The Emerging Agentic Enterprise,” November 2025.

[10] Dataversity, “Case Study: Operationalizing AI Governance at Mastercard,” 2025.